AtomView

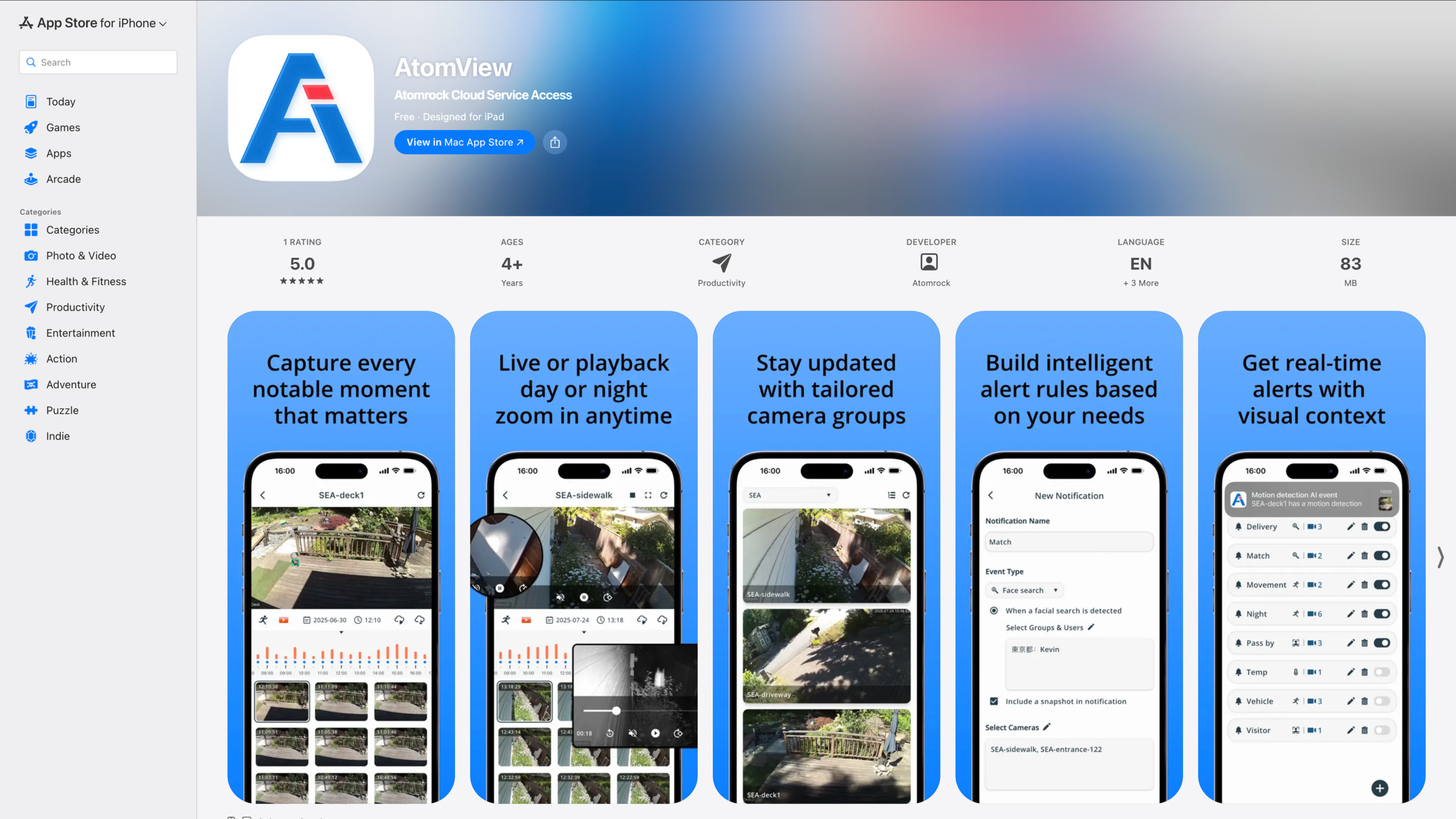

A fully functional mobile AI monitoring app, built from the ground up in Flutter + Firebase, extending Atomrock's existing web-based AI monitoring platform into an on-site, mobile-native experience.

I owned both design and engineering end-to-end: restructured the desktop workflows for a smaller screen, built the app solo in Flutter + Firebase, and integrated AI features (face and motion detection), live streaming, cloud playback, and image-based retrieval into one unified interface.

Rather than shipping a simplified companion app, I brought the web platform's core workflows (real-time tracking, event review, and clip management) into on-site operational scenarios as a mobile-native experience.

The goal: give on-site operators the same power as the web version, with interactions restructured for smaller screens and real-time contexts.

Mobile restructuring

- Made the static timeline scrollable so it fits narrower screens while keeping time navigation continuous.

- Merged the grid-view pop-up into the snapshot preview, so operators can browse events without extra buttons or page transitions.

- Enabled two-way sync between the timeline bar and snapshot preview: selecting one automatically updates the other.
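
One way to model the two-way sync is a single shared selection state that both the timeline and the snapshot preview observe and update. The sketch below is illustrative only. It is written in TypeScript rather than the app's Flutter code, and `SelectionModel`, `DetectionEvent`, and every method name are hypothetical, not taken from AtomView.

```typescript
// Hypothetical event record; field names are illustrative assumptions.
interface DetectionEvent {
  id: string;
  timestampMs: number;
}

type Listener = (selected: DetectionEvent | null) => void;

// Single source of truth for the current selection. Both views write to it
// and both views are notified when it changes.
class SelectionModel {
  private events: DetectionEvent[];
  private selected: DetectionEvent | null = null;
  private listeners: Listener[] = [];

  constructor(events: DetectionEvent[]) {
    // Keep a time-sorted copy so nearest-event lookup is deterministic.
    this.events = [...events].sort((a, b) => a.timestampMs - b.timestampMs);
  }

  subscribe(listener: Listener): void {
    this.listeners.push(listener);
  }

  get current(): DetectionEvent | null {
    return this.selected;
  }

  // Called by the snapshot preview when a snapshot is tapped.
  selectById(id: string): void {
    const match = this.events.find((e) => e.id === id) ?? null;
    this.update(match);
  }

  // Called by the timeline when the user scrubs to a point in time.
  selectNearest(timestampMs: number): void {
    let best: DetectionEvent | null = null;
    for (const e of this.events) {
      if (
        best === null ||
        Math.abs(e.timestampMs - timestampMs) <
          Math.abs(best.timestampMs - timestampMs)
      ) {
        best = e;
      }
    }
    this.update(best);
  }

  private update(next: DetectionEvent | null): void {
    // Ignore no-op changes so the two views cannot re-trigger each other.
    if (next?.id === this.selected?.id) return;
    this.selected = next;
    for (const listener of this.listeners) listener(next);
  }
}

// Usage: both views subscribe to the same model.
const model = new SelectionModel([
  { id: "a", timestampMs: 1000 },
  { id: "b", timestampMs: 5000 },
  { id: "c", timestampMs: 9000 },
]);
const timelineLog: string[] = [];
const previewLog: string[] = [];
model.subscribe((e) => timelineLog.push(e ? e.id : "none"));
model.subscribe((e) => previewLog.push(e ? e.id : "none"));

model.selectNearest(5200); // timeline scrub lands nearest to event "b"
model.selectById("c"); // snapshot tap selects event "c"
```

Because both views read from one model, neither needs to know about the other, and the no-op check in `update` keeps a change from bouncing back and forth between them.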

Project highlights

- Built the functional mobile app directly in Flutter + Firebase.

- Integrated APIs across products and systems, bridging frontend and backend.

- Integrated AI features (face and motion detection) for recording filtering and search.

- Unified mobile and desktop UI/UX through consistent state and interaction models.

- Consolidated AI filtering, live streaming, cloud playback, historical search, and image-based retrieval into one compact, intuitive interface.

Design & Engineering: Wanling Yu

Company: Atomrock

Year: 2025

Platforms: iOS & Android