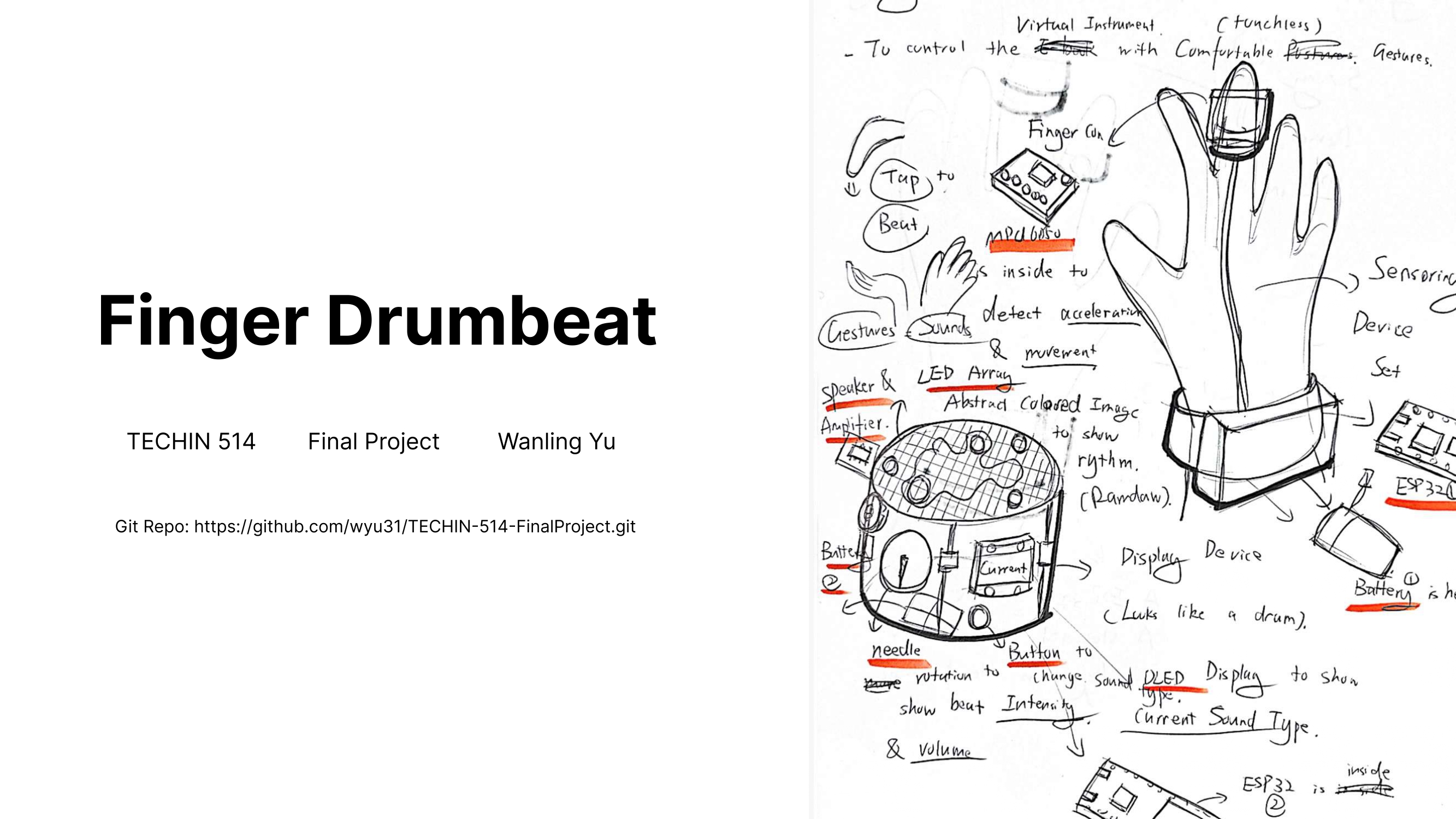

Finger Drumbeat

Designed, built, and programmed Finger Drumbeat, a wearable gadget that lets users play virtual drums through finger gestures.

Finger swing force maps to sound intensity, gesture direction maps to drum type, and motion, audio, and visual feedback come together into one compact modular device.

I handled the project end to end: scoping the interaction concept, architecting the embedded system, fabricating the PCB and enclosure, and writing the gesture-mapping logic.

Music enthusiasts often crave a deeper connection with their favorite tunes, yet attending live shows is not always feasible. Finger Drumbeat offers a tangible way to engage with music anytime and anywhere, letting users tap out rhythms with their fingertips and change drum types with a swipe of the other hand.

I used it as a testbed for how minimal hardware (an MPU6050 motion sensor, an APDS9960 gesture sensor, and ESP32-S3 boards) can deliver real-time, expressive interaction through embedded UX design.

What I built

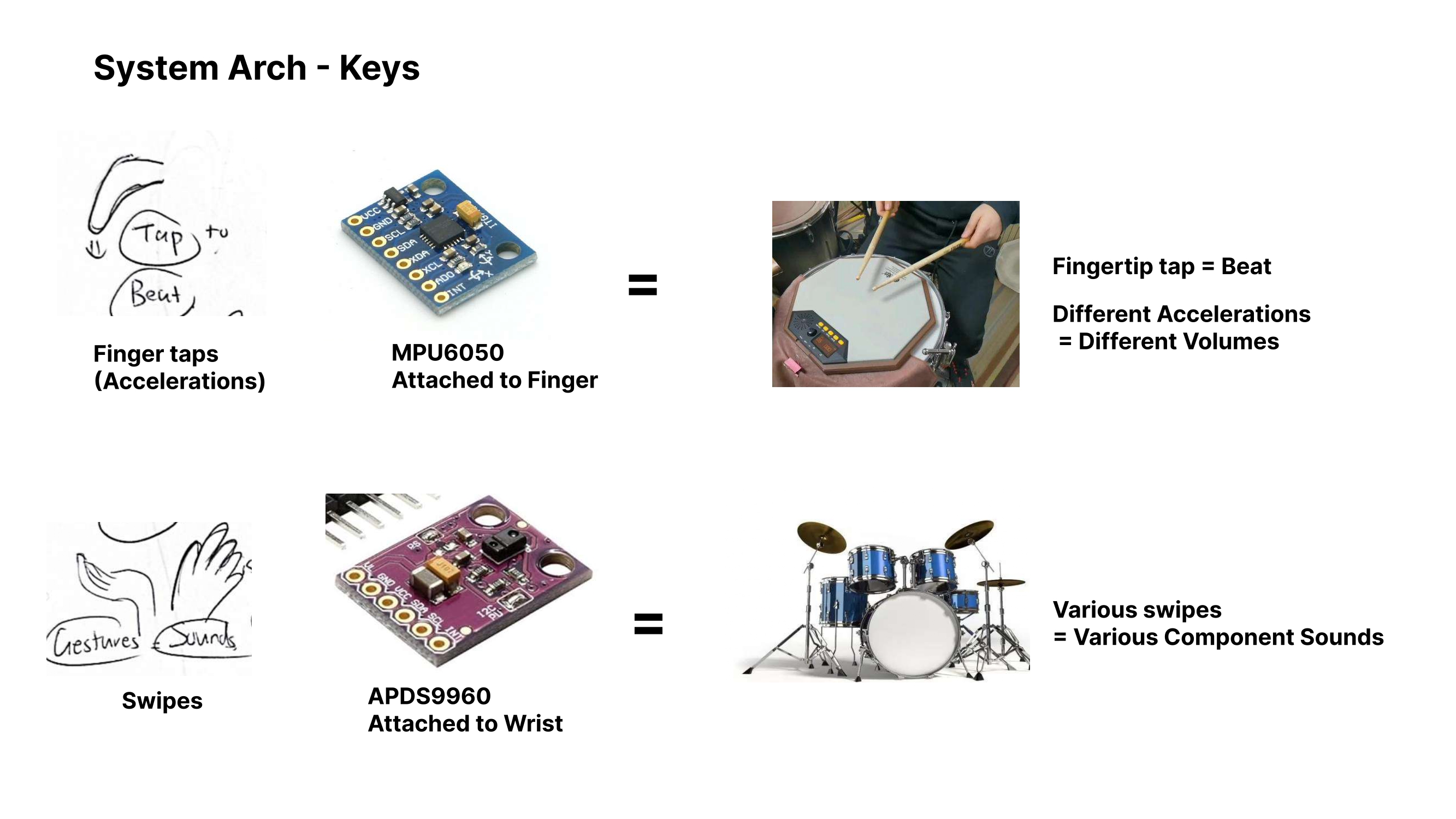

- Interaction concept: mapped fingertip taps to beats and hand swipes to drum types, so one pair of gestures drives a full virtual kit.

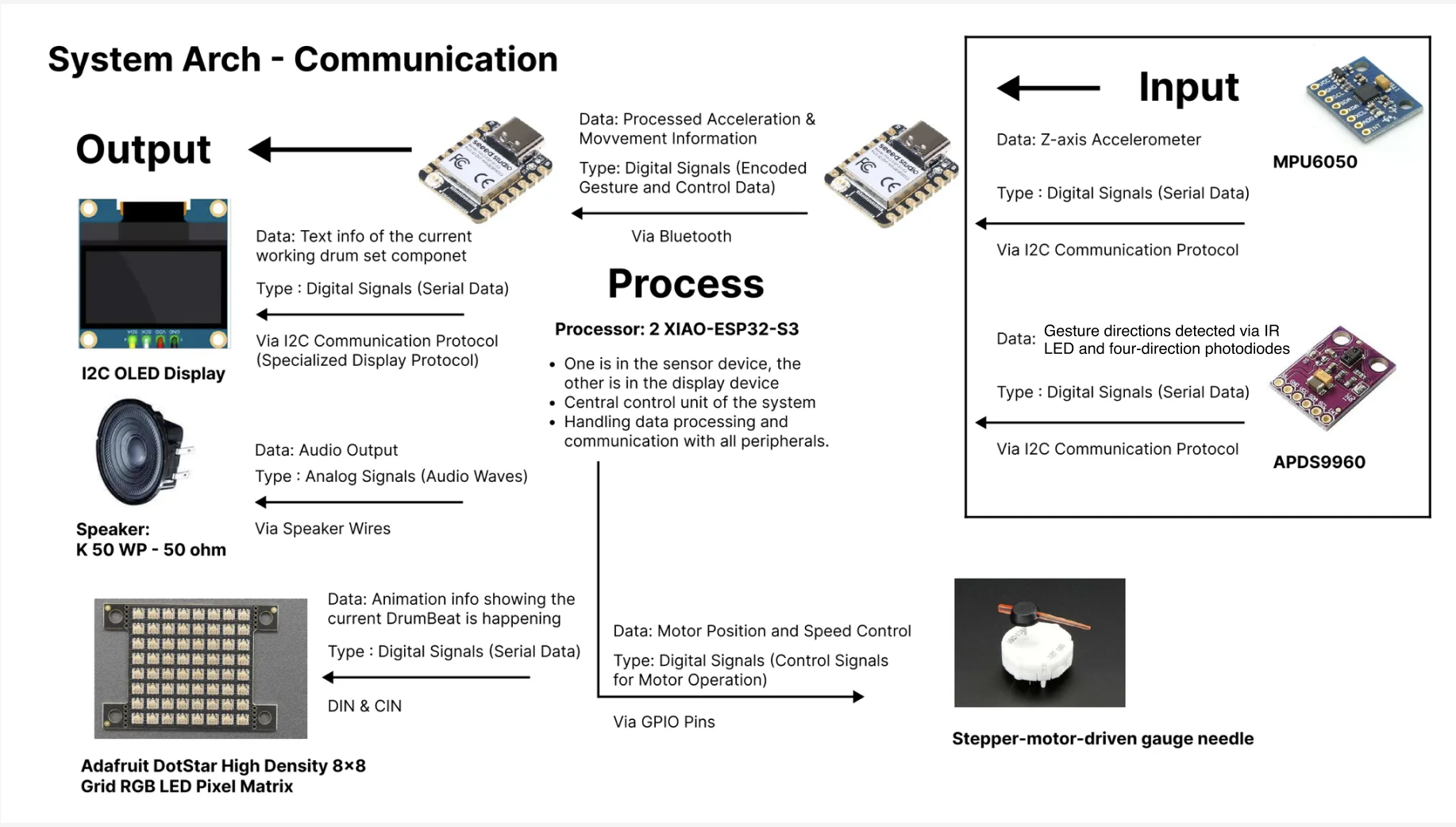

- Embedded system architecture: two XIAO ESP32-S3 boards communicating over Bluetooth, each managing its own sensors, audio, or display peripherals.

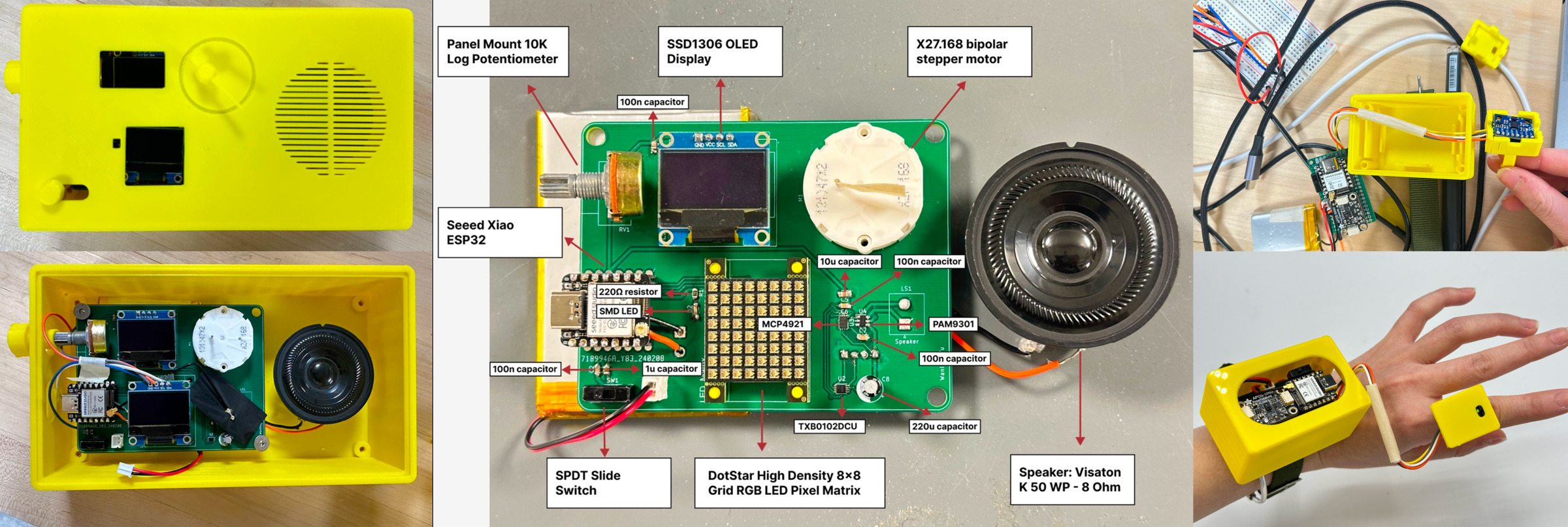

- Custom PCB, enclosure, and power system for wearable integration, packaged into a handheld display unit and a wrist sensor module.

- Modular firmware for gesture mapping, audio playback, and display synchronization, written so each subsystem can be tested and iterated on independently.
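The swipe-to-drum mapping described above can be illustrated with a minimal sketch. Everything named here is hypothetical: the `Swipe` and `Drum` enums, the `selectDrum` and `drumLabel` functions, and the particular direction-to-drum assignments are assumptions for illustration, not the project's actual firmware. The code is plain C++ with no sensor library calls, so the mapping can be tested off-device.

```cpp
#include <cstdint>

// Hypothetical swipe directions, as a gesture sensor might report them.
enum class Swipe : std::uint8_t { None, Up, Down, Left, Right };

// Hypothetical drum kit; the real project may use different drums.
enum class Drum : std::uint8_t { Kick, Snare, HiHat, Tom };

// Returns the drum selected by a swipe. The direction-to-drum
// assignment below is an assumption. No swipe keeps the current drum.
inline Drum selectDrum(Drum current, Swipe swipe) {
    switch (swipe) {
        case Swipe::Up:    return Drum::HiHat;
        case Swipe::Down:  return Drum::Kick;
        case Swipe::Left:  return Drum::Snare;
        case Swipe::Right: return Drum::Tom;
        case Swipe::None:  break;
    }
    return current;
}

// Short label for the current drum, e.g. for showing on a display.
inline const char* drumLabel(Drum drum) {
    switch (drum) {
        case Drum::Kick:  return "Kick";
        case Drum::Snare: return "Snare";
        case Drum::HiHat: return "Hi-Hat";
        case Drum::Tom:   return "Tom";
    }
    return "?";
}
```

Keeping the mapping as a pure function, separate from sensor reads and display writes, is one way to get the independent testability the firmware bullet describes.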

Multimodal feedback loop

- Z-axis finger acceleration (MPU6050) drives sound volume, LED matrix intensity, and stepper-motor gauge rotation, so the harder you tap, the louder, brighter, and more energetic the response.

- Gesture direction from the IR sensor (APDS9960) triggers drum-type changes, with the current drum surfaced in real time on the OLED display.

- A low-pass filter with a dynamic smoothing factor (alpha) on the accelerometer smooths minor movements while staying responsive to intentional taps, so the device feels like an instrument rather than a twitchy sensor.
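As a rough illustration of the feedback loop above, the sketch below shows one common way to build a dynamic-alpha low-pass filter and map its output to a 0-255 intensity value. It is a sketch under stated assumptions, not the project's implementation: the `DynamicAlphaFilter` struct, the `intensityFromAccel` function, the alpha bounds, and the 2 g full-scale value are all hypothetical. The idea is that alpha grows with the size of the change, so small jitters are heavily smoothed while a sharp tap passes through almost immediately.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Exponential low-pass filter whose smoothing factor adapts to the
// size of the incoming change. All constants are assumed values.
struct DynamicAlphaFilter {
    float alphaMin = 0.05f;       // heavy smoothing for small changes
    float alphaMax = 0.8f;        // light smoothing for large changes
    float fullScaleDelta = 1.0f;  // change (in g) at which alpha hits alphaMax
    float state = 0.0f;           // current filtered value (in g)

    float update(float sample) {
        const float delta = std::fabs(sample - state);
        // Scale alpha linearly between alphaMin and alphaMax.
        const float t = std::min(delta / fullScaleDelta, 1.0f);
        const float alpha = alphaMin + (alphaMax - alphaMin) * t;
        state += alpha * (sample - state);
        return state;
    }
};

// Maps a filtered Z-axis acceleration (in g) to a 0-255 intensity that
// could drive volume, LED brightness, or gauge position alike.
// maxG is an assumed full-scale value.
inline std::uint8_t intensityFromAccel(float accelG, float maxG = 2.0f) {
    const float norm = std::min(std::fabs(accelG) / maxG, 1.0f);
    return static_cast<std::uint8_t>(std::lround(norm * 255.0f));
}
```

With these assumed constants, a 0.02 g jitter from rest barely moves the filtered value, while a 1.5 g tap reaches most of its amplitude in a single update. Deriving one intensity value and fanning it out to sound, light, and motion is one way to keep the three outputs in step.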

Project highlights

- Designed a complete embedded UX loop from sensor input to synchronized audio, light, and motion feedback.

- Built a custom PCB, 3D-printed enclosure, and portable power system for the wearable and handheld units.

- Developed modular firmware logic for gesture mapping, audio playback, and display sync, keeping each subsystem independently testable.

- Explored how low-cost, minimal hardware can deliver real-time multimodal interaction that responds like an instrument.

Role

Full-Stack Build

Interaction · System · Firmware

Context

UW GIX

Type

Individual Creative Project

Year

2024